I went to a Spotify webinar last week. The topic was how they moved to fully agentic programming. Not "we added Copilot to VS Code" but a real operational shift where AI agents write and test production software.

The thing I kept thinking about afterward wasn't the AI. It was everything that had to already be in place before any agent touched the codebase.

The documentation thing

Spotify built Backstage (backstage.io) over several years. It's their internal developer portal, with services, APIs, ownership, and runbooks all in one place. Agents need this kind of context to work. Without it they'd be guessing what the auth service is called or who owns the payments pipeline.

Backstage gave them that. Not because Spotify was thinking about AI. Because large organisations have to document things or they fall apart.
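To make the "context" point concrete, here's a rough sketch of what a catalog lookup could look like from an agent's side. The entries follow the general shape of a Backstage catalog descriptor (`kind`, `metadata.name`, `spec.owner`), but the service names, team names, and helper functions are made up for illustration. The webinar didn't show how Spotify's agents actually query Backstage, so treat this as an assumption, not their implementation.

```python
# Hypothetical catalog entries, shaped like Backstage Component descriptors.
# Service names, descriptions, and owners are invented for this example.
CATALOG = [
    {
        "apiVersion": "backstage.io/v1alpha1",
        "kind": "Component",
        "metadata": {
            "name": "auth-service",
            "description": "Issues and validates login tokens",
        },
        "spec": {"type": "service", "lifecycle": "production", "owner": "team-identity"},
    },
    {
        "apiVersion": "backstage.io/v1alpha1",
        "kind": "Component",
        "metadata": {
            "name": "payments-pipeline",
            "description": "Processes subscription payments",
        },
        "spec": {"type": "service", "lifecycle": "production", "owner": "team-payments"},
    },
]


def find_component(catalog, name):
    """Return the Component entry whose metadata.name matches, or None."""
    for entity in catalog:
        if entity["kind"] == "Component" and entity["metadata"]["name"] == name:
            return entity
    return None


def context_for_agent(catalog, name):
    """Render a short plain-text summary an agent could be given as context."""
    entity = find_component(catalog, name)
    if entity is None:
        # No entry means the agent would be guessing, so say so explicitly.
        return f"No catalog entry for '{name}'. Ask a human before guessing."
    meta, spec = entity["metadata"], entity["spec"]
    return (
        f"{meta['name']}: {meta['description']}. "
        f"Owner: {spec['owner']}. Lifecycle: {spec['lifecycle']}."
    )


if __name__ == "__main__":
    print(context_for_agent(CATALOG, "payments-pipeline"))
    print(context_for_agent(CATALOG, "billing-v2"))
```

The useful part is the second case: when the catalog has no answer, the agent is told so instead of inventing one.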

The architecture thing

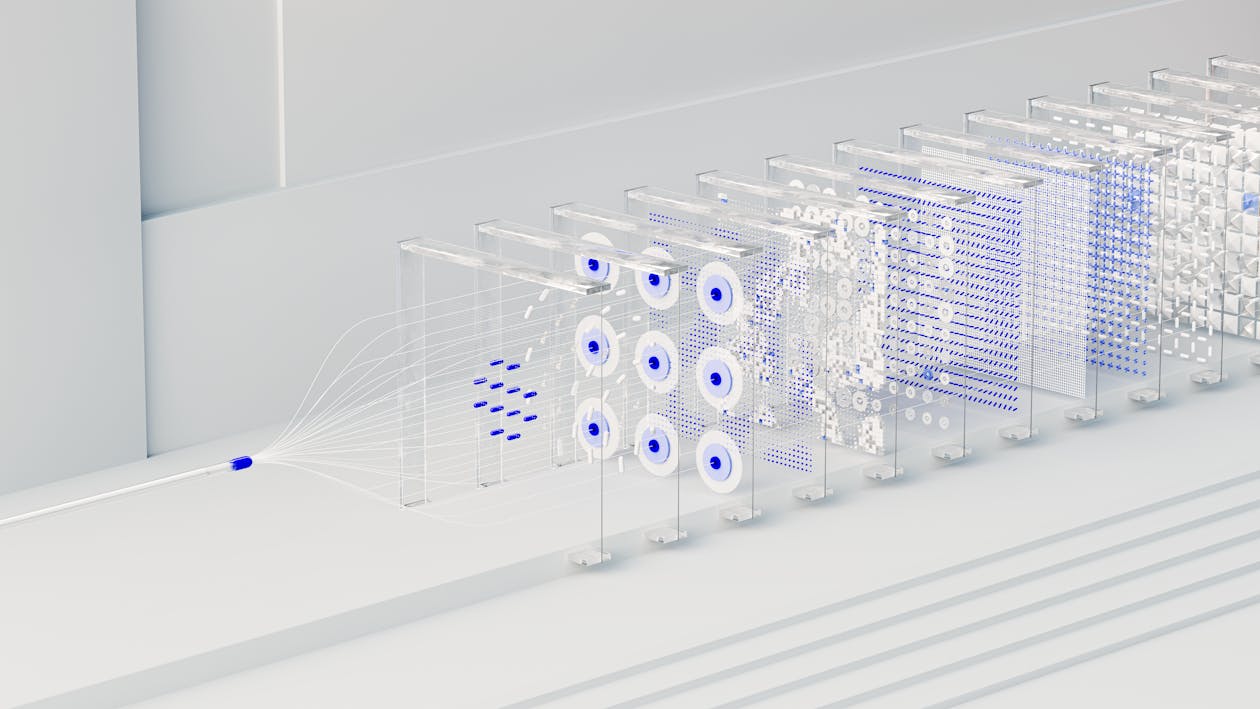

Spotify has been on microservices for twenty years. Small services with clean contracts between them. None of that got built for AI either, but an agent has a much easier time with one service and a known API than with a monolith it has to spelunk through.

The experimentation thing

Features ship behind flags and A/B tests by default. When an agent generates something new, it doesn't land on all users at once. It rolls out to a slice, gets measured, and either grows or gets pulled. Fast iteration on generated code only works if you can pull it back before anyone notices.
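A minimal sketch of the "rolls out to a slice" idea, assuming a simple hash-based percentage rollout. This is a common technique for feature flags, not something the webinar attributed to Spotify's platform, and the flag name and user IDs below are hypothetical.

```python
import hashlib


def bucket(flag_name: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket from 0 to 99."""
    key = f"{flag_name}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag_name: str, user_id: str, rollout_percent: int) -> bool:
    """True if this user falls inside the current rollout slice."""
    return bucket(flag_name, user_id) < rollout_percent


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(1000)]  # hypothetical user IDs
    flag = "agent-generated-playlist-widget"    # hypothetical flag name

    for percent in (0, 10, 50):
        exposed = sum(is_enabled(flag, u, percent) for u in users)
        print(f"{percent}% rollout -> {exposed} of {len(users)} users see the feature")
```

Because the bucket depends only on the flag and the user, raising the percentage adds users without reshuffling the ones already exposed, and setting it to 0 pulls the feature from everyone without a deploy.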

The environment thing

Every dev and test environment at Spotify is provisioned automatically. Lint runs. Tests run. Pipelines fail loudly when something breaks.

Infrastructure built to catch human mistakes catches agent mistakes just as well. A regression introduced by an AI fails the same test it would fail for a human developer. The safety net doesn't care who wrote the code.

Years of engineering discipline turned out to be good preparation for something nobody was specifically preparing for. The transition to agents ended up being smoother because of it.

The YAML thing

On the agent side, having config files encoding the coding style makes a real difference: naming conventions, error handling patterns, what to use and what to avoid. Without this, agents produce code that works but doesn't fit. With it, the output looks like something a senior engineer wrote. Close enough, anyway.

It's a small thing with a large payoff. A well-fed agent produces a clean first draft. An agent flying blind produces something that technically passes review but leaves a mess to clean up six months later.
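Here's roughly what "encoding the coding style" could look like, as a sketch under my own assumptions. The rules, their names, and both helper functions are hypothetical. In a real repo the rules would more likely live in a YAML file; a plain dict is used here so the example runs without extra dependencies.

```python
import re

# Hypothetical style rules, invented for illustration.
STYLE = {
    "function_naming": r"^[a-z_][a-z0-9_]*$",  # snake_case function names
    "avoid": ["print(", "except:"],
    "prefer": {
        "print(": "the logging module",
        "except:": "catching a specific exception type",
    },
}


def style_brief(style):
    """Turn the rules into plain-text instructions to hand to an agent."""
    lines = ["Function names must match: " + style["function_naming"]]
    for pattern in style["avoid"]:
        lines.append(f"Avoid `{pattern}`; prefer {style['prefer'][pattern]}.")
    return "\n".join(lines)


def style_problems(source, style):
    """Return human-readable problems found in a piece of generated source."""
    problems = []
    # Check every function definition against the naming rule.
    for name in re.findall(r"^\s*def\s+(\w+)", source, flags=re.MULTILINE):
        if not re.match(style["function_naming"], name):
            problems.append(f"function name '{name}' breaks the naming rule")
    # Flag constructs the team has decided to avoid (simple substring check).
    for pattern in style["avoid"]:
        if pattern in source:
            problems.append(f"uses `{pattern}`")
    return problems


if __name__ == "__main__":
    generated = (
        "def fetchUser(id):\n"
        "    try:\n"
        "        print(id)\n"
        "    except:\n"
        "        pass\n"
    )
    print(style_brief(STYLE))
    for problem in style_problems(generated, STYLE):
        print("-", problem)
```

The same rules do double duty: `style_brief` feeds them to the agent up front, and `style_problems` checks the draft afterward. The substring check is deliberately crude; a real setup would lean on the linters already in the pipeline.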

What this adds up to

Spotify can now set agents on problems at different levels: drafting features, writing tests, doing exploration. Their existing infrastructure filters out a lot of what goes wrong. Prototyping that used to take a sprint now takes hours. Engineers review and approve rather than write from scratch.

They didn't build AI infrastructure. They built good infrastructure, and it worked.

One thing that hasn't changed: a developer still owns every line. AI writes a draft. You read it, decide if it's right, and ship it with your name on it. That's the deal. The machine doesn't take the blame when something breaks.

AI-generated doesn't mean unreviewed. If you can't explain what the code does, it doesn't go in.

The short version

Five things were already in place when Spotify turned the agents loose:

- Backstage, so agents know what services exist and who owns them.

- Microservices, so they're reasoning about small surfaces instead of monoliths.

- An experimentation platform, so generated code can roll out behind flags and pull back fast.

- Automated dev and test environments, so bad code fails the same gates a human would hit.

- Coding-style configs, so the output looks like the rest of the codebase.

None of it was built for agents. All of it turned out to matter.

We do this too

Our projects are built the same way. Documented, automated, consistent. When we started using AI agents, the transition was easier because the foundation was already solid. Good infrastructure tends to work for humans and machines alike.

That foundation means we can do AI-assisted development that's actually fast and actually clean. The infrastructure handles the gatekeeping. The agents do the heavy lifting. If you're thinking about what that looks like on a real project, talk to us.